Hi Dave,

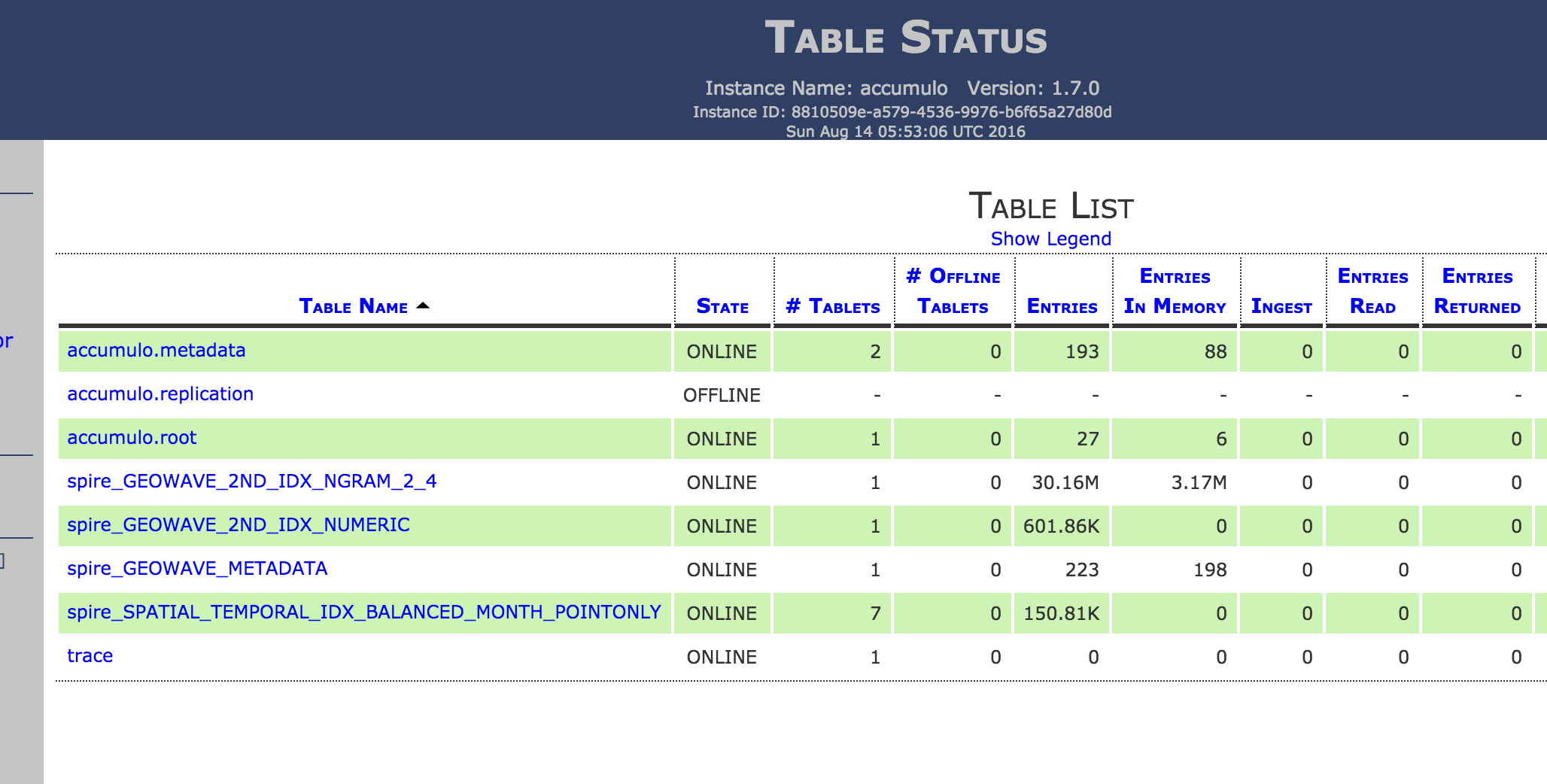

There are a couple things you can do that should dramatically improve your experience. First of all, you can ingest with multiple indices and it will choose the appropriate index for a query, but if you are only using a spatial index then it is using the spatial index. You can see this if you look at the Accumulo monitor web interface while panning the map there is a column showing the active scans.

The first one is quite simple and will probably be the biggest benefit. While, the cluster is very small, it seems like you are running into a natural bottleneck in rendering a map with a million+ points. GeoWave can return points to GeoServer much more rapidly than rendering them on a map. To work around this bottleneck, GeoWave has various tricks. In your case the best one to employ is GeoWave's ability to use the bounds of map requests to translate a pixel to GeoWave's internal spatial index and then GeoWave will skip returning data from the tablet servers once a point that satisfies the filters is found for a given pixel bounds. Therefore, when interacting with a map with millions or more points, there is a cap on how much work GeoServer has to do based on pixels of the map request and GeoServer is no longer dealing with thousands or more overlapping points from Accumulo. To enable this, add a "rendering transformation" like this to your SLD:

"pixelSize" is an optional parameter that is a scale factor (considering the point size for rendering is typically multiple pixels its usually safe for this to be slightly greater than 1, we often use 1.5). You can use this transformation in combination with any stylization rules.

Regarding splits/partitions, recommendations are heavily based on use case and cluster size. When you configure your GeoWave index you can set `--numPartitions` which will set up random pre-splits on the table when you ingest (essentially randomly sharding your data). This will increase the performance of initial writing to Accumulo (otherwise you will rely on exceeding the tablet size and having Accumulo naturally split as you are ingesting a large amount of data). Regarding a recommendation on the number of splits, typically you want to have at least as many active tablets as the total number of cores in your cluster. But this can be achieved by decreasing the table split threshold also (table.split.threshold in accumulo shell, it defaults to 1 GB). Regarding recommendation of when to use pre-splits and when to rely on Accumulo naturally splitting as the threshold is exceeded, the pre-splits has a couple advantages in it will allow a single user to easily guarantee fully utilizing a cluster even when querying a small spatial extent because the splits are randomized. Pre-splitting will also allow ingest to immediately write to multiple nodes without needing to wait for the split threshold (although for best performance on a large scale ingest, bulk/offline ingest is recommended). For a multi-tenant environment in which concurrent users are querying different areas or if the use case is typically using large extents of data that should naturally span multiple nodes, the natural splits will preserve data locality best and should be more efficient.

Anyways, perhaps setting `--numPartitions` on your configured index is something worth playing with (you will need to re-ingest if you want to use this), or you can just decrease the split threshold and compact your existing spatial index table through the Accumulo shell to force more splits and more cluster utilization. My intuition is that you are just running into that rendering bottleneck and using the render transformation in your SLD will be the most critical change. Generally, that cluster is small (we typically use m4 xlarge instances for our small sandboxes) and if you want to see better performance just scale it a bit larger (the entire point of the technology). But in this case you should be able to play with your relatively tiny dataset and just configure it slightly different to see very reasonable performance.

Good luck and let us know how it goes!

Rich