I’d like to share an observation with this list that I did not expect, and that worries me.

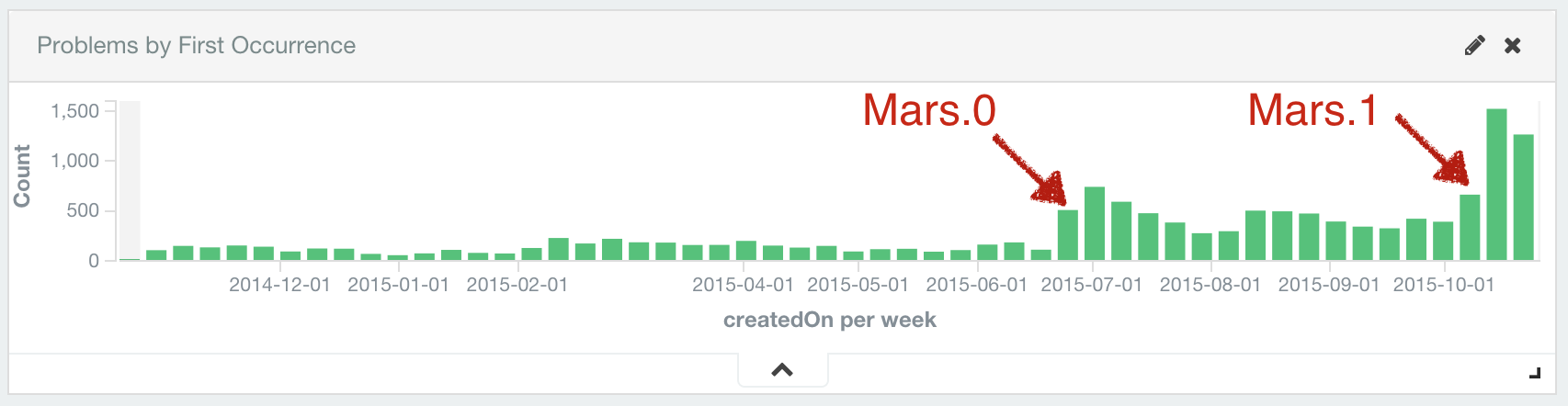

In Mars.0 this number went up to around 400-500 newly observed problems per week on average.

Over the past year we received one million error reports from users.

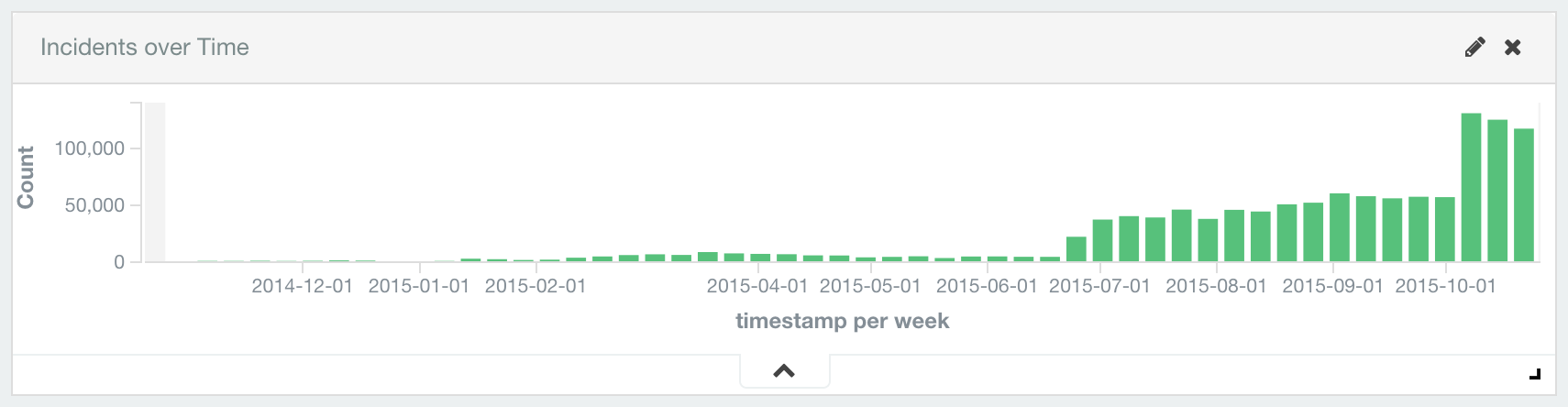

But since Mars.1 the number of reported errors has roughly doubled, from around 55,000 per week to 120,000 per week.

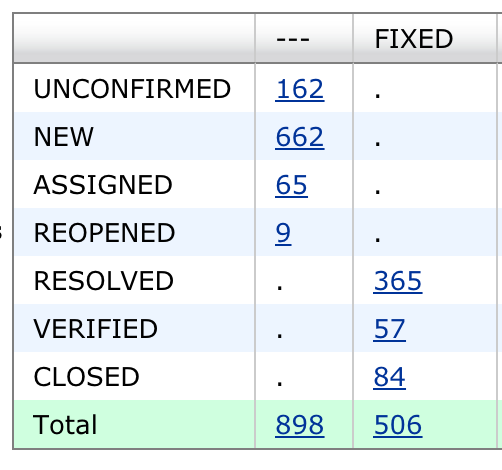

On the positive side, committers fixed 500+ bugs spotted by automated error reports. Another 900 problems are tracked in Bugzilla for triage:

I have no numbers on how many bugs were fixed in total between Mars.0 and Mars.1; certainly many more. But while the number of bugs in Bugzilla doesn’t look bad on its own, it seems that we failed to notably improve the overall quality of Mars.1.

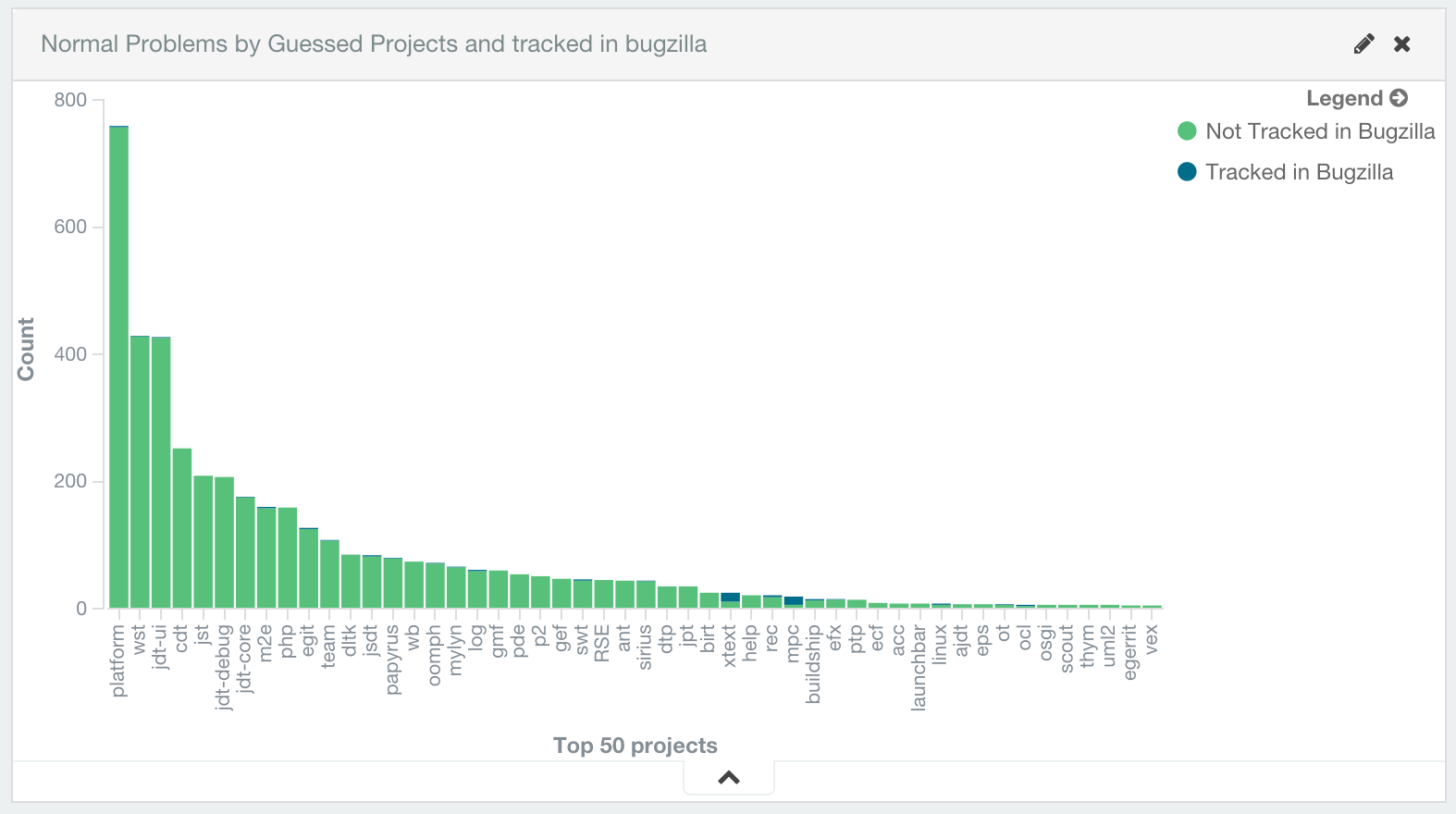

To make this more tangible, I created a chart of all new problems created in the last 30 days, showing which components are involved in these error reports (see below).

As usual, I have to put disclaimers on all these stats: (i) AERI may inappropriately associate a problem with a project, (ii) some problems are log messages rather than real bugs, and so on. If you think this applies to your project, subtract whatever percentage you consider appropriate from your project’s 30-day total of error reports. Let me know if this changes the order of magnitude.
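For anyone who wants to run that check on their own numbers, here is a minimal sketch. The function names and the example figures are hypothetical and are not taken from the AERI data; "order of magnitude" is assumed here to mean the power of ten of the total.

```python
import math


def discounted_total(reports_30d: int, discount_pct: float) -> float:
    """Return the 30-day total after removing an assumed share of non-bugs."""
    if not 0 <= discount_pct <= 100:
        raise ValueError("discount_pct must be between 0 and 100")
    return reports_30d * (1 - discount_pct / 100)


def order_of_magnitude(value: float) -> int:
    """Return floor(log10(value)), e.g. 4 for values from 10,000 to 99,999."""
    if value <= 0:
        raise ValueError("value must be positive")
    return math.floor(math.log10(value))


def magnitude_changes(reports_30d: int, discount_pct: float) -> bool:
    """True if the discount moves the total into a different power of ten."""
    before = order_of_magnitude(reports_30d)
    after = order_of_magnitude(discounted_total(reports_30d, discount_pct))
    return before != after


# Hypothetical project totals, each discounted by an assumed 30%.
for total in (12_000, 50_000):
    print(total, round(discounted_total(total, 30)), magnitude_changes(total, 30))
```

With these made-up inputs, a project just above a power of ten (12,000 reports) drops into the thousands, while a project with 50,000 reports stays in the tens of thousands even after a 30% discount.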

One further observation and personal note:

In the past, several projects refused to review automated error reports because they did not consider them helpful. Given that many of these projects are in this 30-day top 50, this may be a wake-up call for them. Whether or not you consider automated error reports useful for your project doesn’t matter. But the number of problems your users notice should. Automated error reports are just the messenger.