Eclipse Science and Open Source Software for Quantum Computing

Introduction

Scientific computing and data mining are important tools that help us better understand nature and develop novel solutions to pressing problems in energy, health, logistics, and finance. Prominent examples include computational chemistry simulations to investigate new pharmaceuticals and long-range models to predict global climate change. These problems and others like them can only be solved using large-scale high-performance computing (HPC) resources. Ongoing advances in computing architectures support these large-scale scientific computing and data mining tasks, and recent trends in HPC system design focus on heterogeneous architectures that combine many CPU cores with other specialized accelerators. For example, the Titan supercomputer at Oak Ridge National Laboratory relies on many GPUs to implement high-performance numerical calculations.

Extensible, modular, and open-source software plays an important role in making heterogeneous HPC systems accessible to application developers. High-level programming models, software systems, and application programming interfaces (APIs) are necessary to use these large-scale scientific devices, which continue to push the scientific computing envelope. As the U.S. pushes towards the development of an HPC system that operates at exascale (a machine that can execute a billion billion operations per second), there is also an effort to consider which heterogeneous HPC architectures will be required to go beyond exascale. A number of research efforts across the world are beginning to demonstrate novel computing architectures that may help accelerate computing in a post-exascale world. One such effort at the forefront is quantum computing, which leverages the non-intuitive laws of quantum mechanics and quantum information to perform computation.

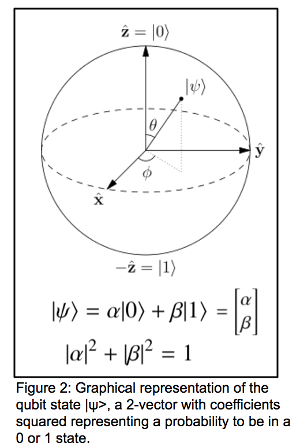

Quantum computing is fundamentally different from conventional computing, where one considers operations on binary units (bits) that take the value 0 or 1. A quantum computer operates on qubits: two-state physical systems that can exist in a superposition of both states and that yield one state or the other, each with a given probability, when measured. The spin of an electron (up or down) or the polarization of a photon (horizontally or vertically polarized) could be used as a qubit, although current state-of-the-art qubit systems consist of superconducting, atomic, or optical setups. Graphically, the state of a qubit can be visualized as in Figure 1, as a vector that can point anywhere on a sphere of radius 1.
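As a minimal sketch of this picture (standard single-qubit algebra rather than anything specific to XACC), a qubit state can be written as cos(θ/2)|0⟩ + e^{iφ} sin(θ/2)|1⟩, where θ and φ are the polar and azimuthal angles of the vector on the sphere. The function names below are illustrative only.

```python
import cmath
import math


def qubit_from_angles(theta, phi):
    """Amplitudes (alpha, beta) of cos(theta/2)|0> + e^{i phi} sin(theta/2)|1>."""
    alpha = complex(math.cos(theta / 2.0))
    beta = cmath.exp(1j * phi) * math.sin(theta / 2.0)
    return alpha, beta


def bloch_vector(alpha, beta):
    """Map a normalized qubit state to its (x, y, z) point on the unit sphere."""
    overlap = alpha.conjugate() * beta
    x = 2.0 * overlap.real
    y = 2.0 * overlap.imag
    z = abs(alpha) ** 2 - abs(beta) ** 2
    return x, y, z


def measurement_probabilities(alpha, beta):
    """Probabilities of reading out 0 and 1 when the qubit is measured."""
    return abs(alpha) ** 2, abs(beta) ** 2


# A vector on the equator of the sphere: an equal superposition of 0 and 1.
alpha, beta = qubit_from_angles(math.pi / 2.0, 0.0)
x, y, z = bloch_vector(alpha, beta)
p0, p1 = measurement_probabilities(alpha, beta)
```

Here the north pole (θ = 0) corresponds to the bit value 0 and the south pole (θ = π) to the bit value 1; every other point on the sphere is a superposition with no classical counterpart.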

The power of quantum computation comes from this generalization of bits to qubits. Conventional computations are constrained to the space of bit strings, and computations are built from Boolean primitives mapping bit strings to bit strings. Quantum logical operations, by contrast, are unitary matrices that rotate the quantum state (recall Figure 1) within a vector space whose dimension grows exponentially with the number of qubits. A further consequence of this rapid growth of the quantum state space is the existence of quantum entanglement: a state that is not separable, meaning that it cannot be expressed as a product of the states of the individual qubits. This differs greatly from binary logical states, where the register is made up of a collection of independent bits. Quantum computations such as Shor’s algorithm for integer factoring and the simulation of quantum physics are performed in polynomial time by harnessing the quantum resources discussed above.
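Separability can be made concrete for two qubits. As a small sketch (a textbook criterion, not part of XACC), a pure two-qubit state with amplitudes a00, a01, a10, a11 is a product of single-qubit states exactly when a00·a11 − a01·a10 = 0; any other state is entangled. The function name is illustrative.

```python
import math


def is_separable(state, tol=1e-12):
    """Check whether a pure two-qubit state (a00, a01, a10, a11) is a product state.

    The amplitudes form a 2x2 matrix; the state factors into two single-qubit
    states exactly when that matrix has rank 1, i.e. zero determinant.
    """
    a00, a01, a10, a11 = state
    return abs(a00 * a11 - a01 * a10) < tol


s = 1.0 / math.sqrt(2.0)

# Each qubit is independently in an equal superposition: separable.
product_state = (0.5, 0.5, 0.5, 0.5)

# Bell state (|00> + |11>) / sqrt(2): cannot be split into per-qubit states.
bell_state = (s, 0.0, 0.0, s)

product_is_separable = is_separable(product_state)
bell_is_separable = is_separable(bell_state)
```

Note also that describing n qubits requires 2^n complex amplitudes, which is the exponential growth of the state space mentioned above.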

So how do we take advantage of this now, without waiting for a general-purpose, stand-alone quantum computer? The answer is to accelerate an HPC system with small-scale quantum computers. An HPC system augmented with quantum resources may begin to help us tackle otherwise intractable problems. In this regard, we can treat quantum processing units (QPUs) much like GPUs: as acceleration units for existing scientific applications. Great strides have been made over the past decade in developing actual QPU hardware that, with a bit of algorithmic ingenuity, can be leveraged in a hybrid computing context. IBM has demonstrated (and publicly released) a 16-qubit QPU with a web portal for user access. Rigetti, Inc. is making similar strides in developing an 8-qubit QPU, D-Wave has produced a 2048-qubit quantum annealer, and Google is reportedly developing a 49-qubit QPU that will be released in late 2017 / early 2018.

With all these great hardware options, the real question becomes: how can we provide a smart, high-level software infrastructure that judiciously offloads select computational tasks to an attached quantum accelerator? Such an infrastructure would expedite research efforts that stand to benefit from quantum acceleration in existing application workflows.

Eclipse Foundation, ORNL, and XACC

Oak Ridge National Laboratory has started investigating what it means to enhance an HPC system with quantum acceleration, and has put forth an open-source hybrid programming model and reference implementation called the eXtreme-scale ACCelerator programming framework, Eclipse XACC. The good news is that XACC is now a fully fledged Eclipse project, the first in an ongoing effort of the Eclipse Science Working Group to drive open source software and community development around quantum computing. This is an exciting development for the early history of quantum computing software!

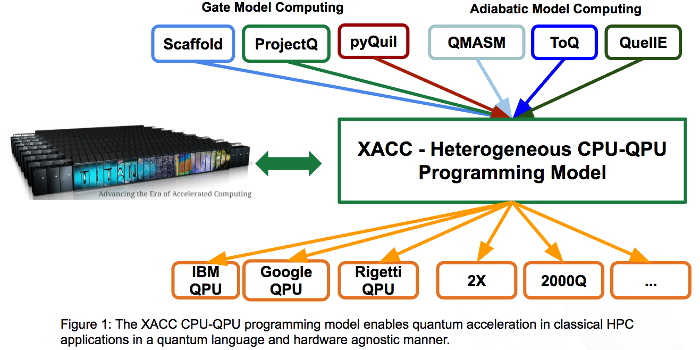

XACC has been specifically designed to enable near-term quantum acceleration within existing high-performance computing applications and workflows. This programming model, and its associated open-source reference implementation, follows the traditional co-processor model, akin to OpenCL or CUDA for GPUs, but takes into account the subtleties and complexities inherent in the interplay between conventional and quantum hardware. XACC provides a high-level API that enables software applications to offload quantum code (represented as quantum kernels) to an attached quantum accelerator in a manner that is agnostic to the quantum programming language and the quantum hardware. Figure 2 shows this graphically: the framework enables programming quantum kernels in any available language and targeting execution of that code on any available hardware backend. This enables one to write quantum code once and then perform benchmarking, verification and validation, and performance studies across a set of virtual (simulator) or physical hardware backends.

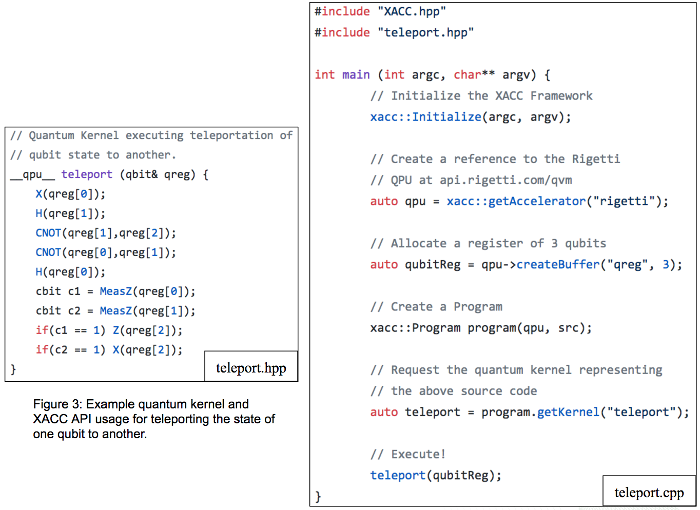

To achieve this interoperability, XACC defines four primary abstractions or concepts: quantum kernels, intermediate representation, compilers, and accelerators. Quantum kernels are C-like functions that contain code intended for execution on the QPU. These kernels are compiled to the XACC intermediate representation (IR), an object model critical to promoting the integration of a diverse set of languages and hardware. The IR provides four main forms for use by algorithm programmers: (1) an in-memory representation and API, (2) an on-disk persisted representation, (3) a human-readable quantum assembly representation, and (4) a control flow graph or quantum circuit representation. This IR is produced by realizations of the XACC Compiler interface, which delegate to the kernel language’s appropriate parser, compiler, and optimizer. Finally, XACC IR instances (and therefore programmed kernels) are executed by realizations of the Accelerator concept, which defines an interface for injecting physical or virtual quantum accelerators. Accelerators take this IR as input and delegate execution to vendor-supplied APIs for the QPU (or to the API for a simulator).

The orchestration of these concepts enables an expressive API for quantum acceleration of scientific applications. Figure 3 demonstrates this API for a simple qubit state teleportation example. This teleport kernel (teleport.hpp) is written in the Scaffold quantum programming language, and is compiled and executed using the XACC API workflow (teleport.cpp): (1) initialize the framework (this loads all available compilers, accelerators, etc.), (2) get a reference to the desired Accelerator, (3) create a register of qubits, (4) construct a Program instance, which orchestrates the compilation of the quantum kernel, and (5) get a reference to the executable kernel functor or lambda representing the compiled kernel code and execute it on the attached Accelerator.
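The division of labor between kernel, compiler, IR, and accelerator can be illustrated with a toy model. The sketch below is not the XACC API: every class and function name in it (`Instruction`, `Compiler`, `Accelerator`, `ToyAssemblyCompiler`, `ToyStateVectorSimulator`) is hypothetical, the kernel is written in a made-up one-gate-per-line assembly rather than Scaffold, and the "accelerator" is a tiny state-vector simulator that supports only the H, X, and CNOT gates.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class Instruction:
    """One IR node: a gate name plus the qubit indices it acts on."""
    gate: str
    qubits: tuple


class Compiler(ABC):
    """Hypothetical interface: kernel source text -> IR."""
    @abstractmethod
    def compile(self, kernel_source):
        ...


class Accelerator(ABC):
    """Hypothetical interface: IR -> probability of each basis state."""
    @abstractmethod
    def execute(self, ir, n_qubits):
        ...


class ToyAssemblyCompiler(Compiler):
    """Parses a made-up assembly with one 'GATE q0 [q1]' per line."""
    def compile(self, kernel_source):
        ir = []
        for line in kernel_source.splitlines():
            tokens = line.split()
            if tokens:
                qubits = tuple(int(t) for t in tokens[1:])
                ir.append(Instruction(tokens[0].upper(), qubits))
        return ir


class ToyStateVectorSimulator(Accelerator):
    """Virtual accelerator: dense state-vector simulation of H, X, CNOT."""
    _S = 1.0 / math.sqrt(2.0)
    _ONE_QUBIT = {
        "H": ((_S, _S), (_S, -_S)),
        "X": ((0.0, 1.0), (1.0, 0.0)),
    }

    def execute(self, ir, n_qubits):
        state = [0j] * (2 ** n_qubits)
        state[0] = 1 + 0j  # start with every qubit in state 0
        for inst in ir:
            if inst.gate in self._ONE_QUBIT:
                matrix = self._ONE_QUBIT[inst.gate]
                self._apply_one_qubit(state, matrix, inst.qubits[0])
            elif inst.gate == "CNOT":
                self._apply_cnot(state, *inst.qubits)
            else:
                raise ValueError(f"unsupported gate: {inst.gate}")
        return [abs(amplitude) ** 2 for amplitude in state]

    @staticmethod
    def _apply_one_qubit(state, m, q):
        bit = 1 << q  # qubit q is bit q of the basis-state index
        for i in range(len(state)):
            if (i & bit) == 0:
                a, b = state[i], state[i | bit]
                state[i] = m[0][0] * a + m[0][1] * b
                state[i | bit] = m[1][0] * a + m[1][1] * b

    @staticmethod
    def _apply_cnot(state, control, target):
        cbit, tbit = 1 << control, 1 << target
        for i in range(len(state)):
            if (i & cbit) and not (i & tbit):
                j = i | tbit
                state[i], state[j] = state[j], state[i]


# Workflow: pick an accelerator, compile the kernel to IR, execute the IR.
BELL_KERNEL = """
H 0
CNOT 0 1
"""
accelerator = ToyStateVectorSimulator()
ir = ToyAssemblyCompiler().compile(BELL_KERNEL)
probabilities = accelerator.execute(ir, n_qubits=2)
# All of the weight ends up on basis states 00 and 11 (an entangled pair).
```

The point of the design is that the application only talks to the two abstract interfaces. Swapping in a different kernel language means writing another `Compiler`, and targeting different hardware means writing another `Accelerator`, while the IR in the middle stays the same.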

XACC supports a number of languages and physical and virtual hardware instances. XACC provides a Compiler realization that enables quantum kernel programming in the C-like Scaffold programming language. This compiler leverages Clang/LLVM library extensions that extend the LLVM IR with quantum gate operations. XACC extends this compiler with support for new constructs, like custom quantum functions and source-to-source translations (mapping Scaffold to other languages). XACC provides an Accelerator realization that enables execution of quantum kernels in any available language for both the Rigetti Quantum Virtual Machine (QVM, Forest API) and a physical two-qubit Rigetti QPU. These Accelerators map the XACC IR to Quil (the Rigetti low-level assembly language) and leverage an HTTP REST client to post compiled quantum kernel code to the Rigetti QVM/QPU driver servers. XACC also supports the D-Wave QPU, which demonstrates the wide applicability of this heterogeneous hybrid programming model across quantum computing models (adiabatic/quantum annealing and gate model quantum computing). XACC has Compiler and Accelerator realizations that enable minor graph embedding of binary optimization problems and execution on the D-Wave Qubist QPU driver server, respectively.
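To give a feel for what mapping an IR to Quil involves, here is a rough sketch that turns a list of (gate, qubits) pairs into Quil-style text. It is an assumption-laden illustration, not XACC's Rigetti Accelerator: the function name `ir_to_quil` and the tuple-based IR are hypothetical, no HTTP request is made, and the classical-memory syntax used here (`DECLARE ro BIT[n]` and `MEASURE q ro[i]`) should be checked against the Quil version you target, since it has changed over time.

```python
def ir_to_quil(ir, measured_qubits=()):
    """Render a toy IR as Quil-style assembly text.

    ir: iterable of (gate_name, qubit_indices) pairs, e.g. ("CNOT", (0, 1)).
    measured_qubits: qubits to read out, in order, into a register named 'ro'.
    """
    lines = []
    if measured_qubits:
        # Classical register that will hold the measurement results.
        lines.append(f"DECLARE ro BIT[{len(measured_qubits)}]")
    for gate, qubits in ir:
        # One instruction per line: gate name followed by its qubit indices.
        lines.append(" ".join([gate] + [str(q) for q in qubits]))
    for slot, qubit in enumerate(measured_qubits):
        lines.append(f"MEASURE {qubit} ro[{slot}]")
    return "\n".join(lines)


bell_ir = [("H", (0,)), ("CNOT", (0, 1))]
quil_text = ir_to_quil(bell_ir, measured_qubits=(0, 1))
```

In a real backend, text like this would form the payload sent to the vendor's driver server; the request format and authentication are vendor-specific and are deliberately left out of this sketch.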

XACC provides the base-level API that allows computational scientists to leverage quantum computing through the familiar accelerated-computing model. It lays the foundation for higher-level data structures that provide an easy-to-use mechanism for accessing common quantum algorithms. At its core, it starts to provide an extensible software infrastructure that can act as the glue between all the great language and hardware implementations out there for quantum computing. Going forward, this project aims to provide a familiar mechanism for enhancing HPC applications with quantum acceleration and for programming post-exascale computing technologies.

Acknowledgements

We gratefully acknowledge the funding source of this work, the ORNL Laboratory Directed Research and Development Fund. We would also like to thank the XACC team: Travis Humble, Eugene Dumitrescu, Dmitry Liakh, Mengsu Chen, Keith Britt, Timothy Goodrich, and Jay Billings. Finally, we thank Scott Jones, Communications Manager for the ORNL Computing and Computational Sciences Directorate, for providing thoughtful comments on this article.

Co-authors: Alex McCaskey, Travis Humble, Jay Billings, Eugene Dumitrescu, Dmitry Liakh, Mengsu Chen

About the Author